The rapid rise of artificial intelligence is reshaping not only the global economy but also the electric grid, in ways few system planners anticipated. AI training hyperscalers, operating at hundreds of megawatts per campus, are emerging as a new class of load with characteristics that challenge traditional grid planning, interconnection processes, and reliability frameworks.

Standard data centers support traditional cloud computing, enterprise IT, and web services, and exhibit relatively stable, predictable load profiles with gradual daily variation.

AI inference data centers run deployed models in real time, serving search queries, recommendations, language responses, or vision processing, and while their aggregate demand can be large, their power profiles are generally smoother and more distributed because workloads scale with user activity.

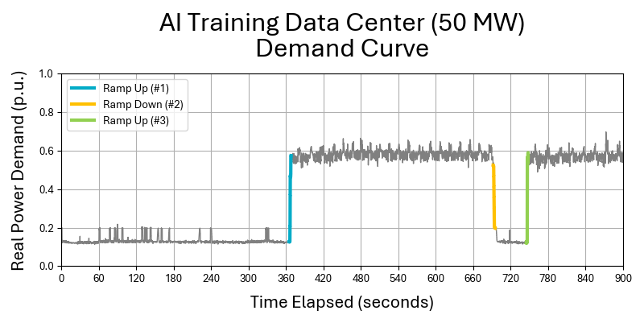

In contrast, AI training data centers (e.g., those training models for OpenAI and Anthropic) are the most demanding and operationally dynamic: they operate massive, tightly synchronized GPU clusters that execute long-duration training runs with high power density and rapid sub-second load swings as compute and checkpoint (saving model weights) phases alternate. Training facilities behave more like industrial process plants than conventional IT loads, creating sharp ramp rates, high reactive power variability, and greater grid integration challenges than either standard or inference-focused facilities.

These phase transitions resemble generator trips in reverse: sudden, repeated step changes in load that strain frequency control, voltage stability, and spinning reserves.

The challenge is not simply how much electricity AI consumes. It is how it consumes it.

Fig 1: An AI Training Data Center Begins a Training Run (EdgeTunePower)[1]

[1] NERC White Paper p.13

The Hyperscale Load Profile Problem

AI training campuses often range from 100 MW to over 300 MW at a single interconnection point. Growth projections suggest that AI-dedicated data center capacity could expand at more than 40% CAGR over the next decade. Even if total AI demand remains a small share of overall U.S. electricity demand, its geographic concentration and dynamic behavior produce outsized system impacts.

Key characteristics include:

- Low load diversity – Tens of thousands of GPUs operating in synchronized training cycles.

- Sub-second ramping – Rapid transitions between compute and checkpoint phases.

- High reactive power swings – Voltage fluctuations beyond the corrective response window of conventional generation.

- Behind-the-meter (BTM) complexity – Co-located generation and storage switching rapidly between grid and islanded modes.

These patterns disrupt long-standing planning assumptions based on smoother commercial and industrial demand curves. Traditional load forecasting models, designed around hourly averages, cannot capture millisecond-scale transients.
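A minimal sketch of why hourly averages hide these transients. It builds a synthetic square-wave load that alternates between a compute phase and a checkpoint phase, then compares the hourly mean with the largest sample-to-sample ramp. All numbers (200 MW compute, 80 MW checkpoint, 9 s / 1 s phases, 100 ms sampling) and all function names are illustrative assumptions, not measurements from any real campus.

```python
def synthetic_training_load(duration_ms, dt_ms, compute_mw, checkpoint_mw,
                            compute_ms, checkpoint_ms):
    """Square-wave load: a compute phase followed by a checkpoint phase, repeated.

    Times are integer milliseconds so phase boundaries are exact.
    All parameter values are hypothetical.
    """
    period_ms = compute_ms + checkpoint_ms
    samples = []
    for t_ms in range(0, duration_ms, dt_ms):
        in_compute = (t_ms % period_ms) < compute_ms
        samples.append(compute_mw if in_compute else checkpoint_mw)
    return samples


def hourly_mean_mw(samples):
    """Average demand over the whole window (what an hourly model would see)."""
    return sum(samples) / len(samples)


def max_ramp_mw_per_s(samples, dt_ms):
    """Largest absolute change between consecutive samples, in MW per second."""
    largest_step = max(abs(b - a) for a, b in zip(samples, samples[1:]))
    return largest_step * 1000.0 / dt_ms


# One hour sampled every 100 ms (hypothetical profile).
load = synthetic_training_load(
    duration_ms=3_600_000, dt_ms=100,
    compute_mw=200.0, checkpoint_mw=80.0,
    compute_ms=9_000, checkpoint_ms=1_000,
)
print("hourly mean (MW):", hourly_mean_mw(load))
print("max ramp (MW/s):", max_ramp_mw_per_s(load, dt_ms=100))
```

Under these assumed inputs the hourly mean is 188 MW, a figure that looks like a steady industrial load, while the same hour contains repeated 120 MW steps that register as 1,200 MW/s at 100 ms resolution.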

Why Traditional Grid Architecture Struggles

Most bulk power systems were built around predictable generation and relatively gradual load changes. Mechanical generators regulate frequency over seconds, not milliseconds. Transmission upgrades take years. Interconnection studies often assume static load blocks, not high-frequency oscillations.
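To give a rough sense of scale, the sketch below applies the standard aggregate swing-equation approximation for the initial rate of change of frequency (RoCoF) after a load step, df/dt = -ΔP · f0 / (2 · H · S). The inertia constant, system size, and step size are hypothetical values chosen for illustration; real systems need a full dynamic study.

```python
def rocof_hz_per_s(load_step_mw, f0_hz, inertia_h_s, system_mva):
    """Initial rate of change of frequency after a sudden load step.

    Aggregate swing-equation approximation:
        df/dt = -dP * f0 / (2 * H * S)
    load_step_mw : positive for a load increase, negative for a load drop
    inertia_h_s  : aggregate inertia constant H in seconds (assumed)
    system_mva   : total rating of synchronized generation in MVA (assumed)
    """
    return -load_step_mw * f0_hz / (2.0 * inertia_h_s * system_mva)


# Hypothetical: a 300 MW step on a 60 Hz system with H = 4 s.
large_system = rocof_hz_per_s(300.0, 60.0, 4.0, 50_000.0)
small_system = rocof_hz_per_s(300.0, 60.0, 4.0, 5_000.0)
print("RoCoF, 50 GVA system (Hz/s):", large_system)
print("RoCoF,  5 GVA system (Hz/s):", small_system)
```

The same step produces a tenfold larger initial frequency slope on the smaller system, which is one way to see why concentrated loads matter more in constrained or weakly connected regions.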

As a result, hyperscaler projects increasingly face:

- Multi-year interconnection queues.

- Requirements for on-site firm generation.

- Mandated grid stabilization measures.

- Costly substation and feeder upgrades.

Regulators and reliability authorities have begun scrutinizing these projects more closely, particularly in markets such as ERCOT and PJM. The tension is clear: digital infrastructure is scaling exponentially, while grid infrastructure evolves linearly.

The Case for a New Power System Architecture

The integration of AI hyperscalers requires a shift from static infrastructure expansion to dynamic, grid-interactive architecture.

This architecture includes three core elements:

- Fast-response power conditioning at the edge.

- High C-rate energy storage to buffer ramp events.

- Integrated telemetry and coordinated control between BTM assets and system operators.

Rather than being treated as passive loads, data centers must become active participants in grid stability.

High C-Rate Energy Storage as a Shock Absorber

Not all battery energy storage systems (BESS) are suited for AI applications. The critical metric is not just energy capacity (MWh) but power capability (MW) relative to that capacity. This ratio is the C-rate, which describes how quickly a battery can charge and discharge.

Conventional lithium iron phosphate (LFP) systems typically operate at 0.5C to 1C for long-duration discharge. That range suits energy shifting over one to two hours (a four-hour system discharges at roughly 0.25C), but it is insufficient for repeated sub-second ramp management.

AI training facilities require:

- High instantaneous discharge rates (5C or greater in some applications).

- Ultra-fast response times (millisecond-scale).

- Extremely high cycle life (tens of thousands of shallow cycles).

- Minimal degradation under frequent power swings.

Lithium-titanate oxide (LTO) chemistries are particularly well positioned for this role. Their unique anode structure enables rapid charge and discharge with minimal lithium plating, supporting 20,000+ cycles in high-duty applications. Other high-power chemistries and hybrid supercapacitor architectures may also play a role.

In this configuration, storage functions less like a long-duration energy reservoir and more like a power conditioning system: a high-speed electrical buffer between GPU clusters and the grid.
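The relationship between C-rate, power, and energy can be sketched in a few lines. By definition, power (MW) equals C-rate times energy capacity (MWh), so the energy a battery must hold to cover a given power swing shrinks as the C-rate rises. The 50 MW swing below is a hypothetical figure, and the function names are illustrative.

```python
def power_capability_mw(energy_mwh, c_rate):
    """Continuous power a battery can deliver: P = C-rate * E."""
    return c_rate * energy_mwh


def energy_needed_mwh(swing_mw, c_rate):
    """Energy capacity required so the battery can cover a power swing: E = P / C-rate."""
    return swing_mw / c_rate


swing_mw = 50.0  # hypothetical ramp the battery must absorb or supply
for c_rate in (0.5, 1.0, 5.0):
    print(f"{c_rate}C -> {energy_needed_mwh(swing_mw, c_rate)} MWh installed")
```

For this assumed 50 MW swing, a 0.5C system needs 100 MWh installed and a 1C system needs 50 MWh, while a 5C system needs only 10 MWh. This is why the power-to-energy ratio, rather than energy capacity alone, drives sizing for ramp buffering.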

Functional Benefits in AI Campuses

A properly engineered high C-rate BESS can:

- Smooth sub-second ramp events, reducing effective ramp rates from >1.5 p.u./sec to manageable levels.

- Stabilize voltage and frequency at the point of common coupling.

- Limit peak exposure, reducing required transformer and feeder upgrades.

- Mitigate harmonic distortion, protecting sensitive power electronics.

- Enable flexible interconnection agreements, accelerating project approvals.

In essence, the battery becomes an intelligent interface, absorbing volatility before it propagates upstream.
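As a simplified illustration of ramp smoothing, the sketch below caps how fast grid draw may change and lets the battery cover the difference between the campus load and the grid draw. It is a toy controller under stated assumptions: no state-of-charge limits, no converter losses, and a hypothetical load trace and ramp limit.

```python
def ramp_limit(load_mw, dt_s, max_ramp_mw_per_s):
    """Split a load trace into ramp-limited grid draw and battery power.

    Returns (grid_mw, battery_mw). Battery power is positive when
    discharging (load above grid draw) and negative when charging.
    Simplification: the battery is assumed never to run empty or full.
    """
    max_step = max_ramp_mw_per_s * dt_s
    grid = [load_mw[0]]
    battery = [0.0]
    for p in load_mw[1:]:
        # Move grid draw toward the load, but no faster than the ramp limit.
        delta = max(-max_step, min(max_step, p - grid[-1]))
        grid.append(grid[-1] + delta)
        battery.append(p - grid[-1])
    return grid, battery


# Hypothetical trace: a 100 MW step up, then a step down, sampled every 0.5 s.
load = [100.0, 100.0, 200.0, 200.0, 200.0, 200.0, 100.0, 100.0]
grid, battery = ramp_limit(load, dt_s=0.5, max_ramp_mw_per_s=100.0)
print("grid   :", grid)
print("battery:", battery)
```

In this example the grid sees each 100 MW step spread over two samples instead of one, and the battery briefly discharges 50 MW on the way up and absorbs 50 MW on the way down. A production controller would also manage state of charge and reactive power.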

Behind-the-Meter Risk and Visibility

The growth of BTM configurations introduces new system-level uncertainty. AI campuses increasingly combine:

- On-site natural gas generation.

- Renewable assets.

- High-power storage.

- Dynamic compute loads.

Without coordinated telemetry and ride-through standards, abrupt transitions between on-site generation and grid supply can produce multi-megawatt step changes.

Future interconnection standards must incorporate:

- Real-time visibility into BTM assets at the Point of Common Coupling.

- Coordinated protection settings.

- Mandatory fast-response buffering capability.

This represents not just a technical shift, but a regulatory one.

Economic Implications

Although high C-rate systems may have higher upfront $/kWh costs than long-duration storage, their economics must be evaluated differently:

- Avoided downtime in AI training environments where computational runs can cost millions.

- Deferred infrastructure CAPEX from reduced grid upgrade requirements.

- Participation in ancillary markets, including fast frequency response.

- Extended equipment life through reduced electrical stress.

In high-duty AI environments, lifecycle cost per cycle can fall below $0.15 per kWh-cycle, with asset life extending 15–20 years under heavy cycling.
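A back-of-the-envelope way to reason about cost per kWh-cycle is to divide installed cost by lifetime energy throughput. The sketch below uses that simplification; it ignores O&M, financing, round-trip losses, and capacity fade, and every input value is a hypothetical placeholder rather than a quoted price.

```python
def cost_per_kwh_cycle(capex_usd_per_kwh, cycle_life, depth_of_discharge):
    """Installed cost divided by lifetime energy throughput per installed kWh.

    capex_usd_per_kwh  : installed cost per kWh of capacity (assumed)
    cycle_life         : number of cycles over the asset life (assumed)
    depth_of_discharge : fraction of capacity used per cycle, 0..1 (assumed)
    """
    throughput_kwh_per_installed_kwh = cycle_life * depth_of_discharge
    return capex_usd_per_kwh / throughput_kwh_per_installed_kwh


# Hypothetical inputs: $1,000/kWh installed, 20,000 cycles at 50% depth.
print(cost_per_kwh_cycle(1000.0, 20_000, 0.5))
```

With these placeholder inputs the result is $0.10 per kWh-cycle. The point is the structure of the calculation: high cycle life offsets a higher upfront $/kWh figure.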

From Grid Stressor to Grid Asset

AI hyperscalers are often portrayed as grid stressors. With appropriate architecture, they can instead become stabilizing anchors for modernization.

By embedding high C-rate storage and advanced control systems:

- AI campuses can provide fast frequency response.

- They can absorb renewable variability.

- They can support voltage regulation in constrained regions.

- They can serve as flexible demand resources.

Rather than waiting for transmission expansion, hyperscalers can deploy localized power intelligence.

Conclusion: Designing for Power Intelligence

The next era of digital infrastructure will be defined not just by compute density, but by power intelligence.

AI training hyperscalers introduce a new electrical paradigm: large, fast, and dynamic loads that cannot be integrated through conventional planning alone. The solution is not simply more wires and bigger substations. It is a new architecture that blends high C-rate energy storage, advanced power electronics, and coordinated grid interaction. If hyperscalers are to scale sustainably, reliability and flexibility must be engineered at the edge.

The question is no longer whether AI will reshape the grid. It is whether the grid will evolve fast enough to support AI.